Code coverage and automated checks: Is 100% coverage enough?

Code coverage doesn't mean risk coverage...

Questions on code coverage

Recently TJ Maher (@tjmaher1) posted some great questions in his article "Are unit tests and 100% code coverage enough?". They are questions that we should be asking ourselves with regard to Continuous Development and DevOps. One question jumped out at me, and it’s one I find myself challenging a lot when it comes to automated checking.

‘Do you think 100% code coverage of unit tests and integration tests is enough in environments using Continuous Deployment? What do you use as supplements in your testing efforts?’

The discussion and pursuit of 100% code coverage with automated checks are nothing new. But continuous deployment aims to enable teams to ship regular, small releases and to adopt a test-first culture, so automated checks have taken on a more vital role. It’s important to scrutinise automated checks more and ask:

What is the role of automated checks in helping us understand what sort of product we are actually releasing? What are the weaknesses of automated checks?

Is 100% Code Coverage enough?

When I think about code coverage I’m reminded of Conway’s Game of Life. The Game of Life, created by John Horton Conway, consists of four rules. These rules apply to an infinite two-dimensional grid in which each cell is either ‘alive’ or ‘dead’. The Game of Life’s four rules are:

- Any live cell with fewer than two live neighbours dies, as if caused by underpopulation.

- Any live cell with two or three live neighbours lives on to the next generation.

- Any live cell with more than three live neighbours dies, as if by overpopulation.

- Any dead cell with exactly three live neighbours becomes a live cell, as if by reproduction.

You can find a more in-depth explanation of the Game of Life here. However, the point I am making is that the Game of Life, at first glance, looks to be a simple set of rules.

So let’s imagine we are creating a product that follows these four rules. Each rule has clear criteria that we could create an automated check for, to confirm whether the rule is implemented correctly. With all four rules automated we have 100% code coverage and, assuming our checks pass, we are happy to release. Right?
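To make that concrete, here is a minimal sketch of what such a product and its four checks might look like. It is only an illustration under my own assumptions: the `step` function, the set-of-coordinates representation and the check names are hypothetical, not taken from any particular implementation.

```python
from collections import Counter


def step(live):
    """Return the next generation for a set of live (x, y) cells."""
    # Count, for every cell on the grid, how many live neighbours it has.
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Alive next turn: exactly three neighbours, or two if already alive.
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


def check_underpopulation():
    # (0, 0) has only one live neighbour, so it should die.
    assert (0, 0) not in step({(0, 0), (1, 0)})


def check_survival():
    # (1, 1) has two live neighbours, so it should live on.
    assert (1, 1) in step({(0, 0), (1, 1), (2, 2)})
    # In a 2x2 block every cell has three live neighbours.
    assert (0, 0) in step({(0, 0), (0, 1), (1, 0), (1, 1)})


def check_overpopulation():
    # (1, 1) has four live neighbours, so it should die.
    assert (1, 1) not in step({(1, 1), (0, 1), (2, 1), (1, 0), (1, 2)})


def check_reproduction():
    # Dead cell (1, 1) has exactly three live neighbours, so it is born.
    assert (1, 1) in step({(0, 0), (0, 1), (1, 0)})


for check in (
    check_underpopulation,
    check_survival,
    check_overpopulation,
    check_reproduction,
):
    check()
```

Every line of `step` is exercised by these checks, so a coverage tool would likely report 100% for it. Each check, though, only confirms one programmed expectation about a single generation.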

Challenging our assumptions of the product

At the point of those automated checks passing, what do we know about them? We know that each rule passes our programmed expectation, but what else do we know? Consider how an end-user might use or consume the program. There are many versions of the Game of Life that you can try out online. What do you notice? The Game of Life offers many different outcomes, depending on what state you put your game in before you begin, and that leads to all sorts of possibilities. Does 100% code coverage inform us of all these different end results?
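As a rough illustration of how much the starting state matters, the sketch below runs a few well-known seeds through the same rules and describes what happens. The `describe` helper, its labels and the 20-generation cut-off are my own assumptions for the example.

```python
from collections import Counter


def step(live):
    """Return the next generation for a set of live (x, y) cells."""
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


def describe(seed, generations=20):
    """Run a seed for a while and summarise what the grid ends up doing."""
    seen = {}  # grid state -> generation in which it first appeared
    state = frozenset(seed)
    for generation in range(generations + 1):
        if not state:
            return "dies out"
        if state in seen:
            period = generation - seen[state]
            if period == 1:
                return "still life"
            return f"oscillator, period {period}"
        seen[state] = generation
        state = frozenset(step(state))
    # No exact repeat within the cut-off, e.g. a pattern that keeps moving.
    return "still changing"


seeds = {
    "pair": {(0, 0), (1, 0)},
    "block": {(0, 0), (0, 1), (1, 0), (1, 1)},
    "blinker": {(0, 1), (1, 1), (2, 1)},
    "glider": {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)},
}

for name, seed in seeds.items():
    print(name, "->", describe(seed))
```

The same four rules, all of them "covered", give four different kinds of behaviour here. None of the rule-level checks would tell us which of these a user is going to see.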

Now let’s consider different contexts for our product and the risks that might affect them:

- Imagine the Game of Life was being used in a video game to create bots. How might the initial state affect the game?

- What if it was being used to create visualisations in a club? Would it create visuals that enhance the space? How would it avoid creating patterns that might be undesirable?

- If we left our product to run for days, weeks, years to create mathematical models, what would happen? What if we filled the grid with many live cells? Would it have an effect on the performance and stability of whatever was running it?
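The last question is one we can start to explore with a small experiment. The sketch below is only an assumption about how you might begin: it records how many live cells exist after each generation for a given seed, which hints at how memory and processing time could grow in a long-running program. The `population_history` helper and the choice of 50 generations are hypothetical.

```python
from collections import Counter


def step(live):
    """Return the next generation for a set of live (x, y) cells."""
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


def population_history(seed, generations):
    """Return the number of live cells at generation 0, 1, ..., generations."""
    state = set(seed)
    sizes = [len(state)]
    for _ in range(generations):
        state = step(state)
        sizes.append(len(state))
    return sizes


# The R-pentomino: five cells that keep changing for a long time.
r_pentomino = {(1, 0), (2, 0), (0, 1), (1, 1), (1, 2)}

sizes = population_history(r_pentomino, 50)
print("start:", sizes[0], "peak so far:", max(sizes))
```

A script like this does not answer the question on its own, but it gives a tester something to observe and investigate, which is different from a check that simply passes or fails.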

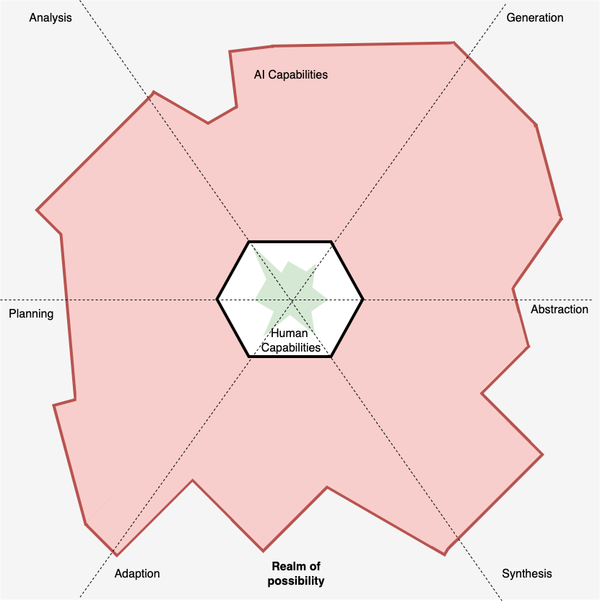

These are hypothetical situations, but whatever your product is, it is vulnerable to many, many risks. They show us the importance of risks such as the environment the product runs in or the desires of end users, which are things automation cannot inform us about. 100% code coverage is unable to tell us everything about our product, so what’s the value in automation? Are there other testing activities we need?

Knowing the limits of automation

Automation is useful but has limitations. 100% code coverage cannot give us a full picture, but it can support testing and encourage testing activities. We can use automation to detect changes in our product and to trigger other testing activities.

So is 100% code coverage enough? My opinion is a big fat NO. But the more code coverage we have, the better we can react to unexpected changes in the system. Testers can use their skills and knowledge of the product to support developers with code coverage. But we can also use our testing skills in other phases of the development lifecycle.

What might these other testing activities look like? Here are a few recommendations that offer some great answers:

- The release of ‘Testing in DevOps’ by Katrina Clokie

- Rob Meaney’s and Gus Evangelisti’s talks from TestBash Belfast (requires subscription access).

- Dan Ashby’s blog post on Continuous Testing in DevOps